By Shin'ya Ueoka (@ueokande), Hirotaka Yamamoto (@ymmt2005)

As part of Project Neco, we are building a highly scalable data center network for large Kubernetes clusters.

This is the first in a series of articles describing the network implementation of Neco. In this article, we describe the challenges and our approaches to them.

For the impatient, here is the TL;DR.

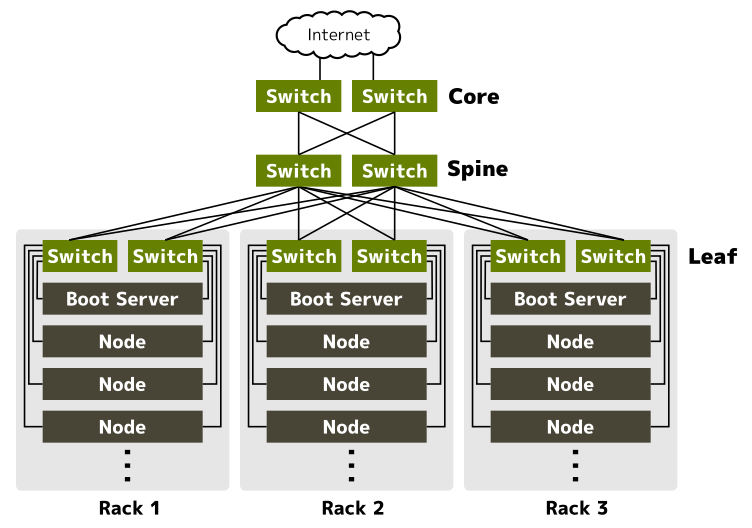

- Leaf-spine topology is employed for high scalability.

- For redundancy, each rack is equipped with two top-of-rack switches and each server has two NIC ports.

- BGP alongside BFD is used to implement redundant server connectivity. No NIC bonding/teaming is involved.

- We created a new CNI plugin named Coil that can work with MetalLB and Calico.

Challenges

Building a highly scalable, fault-tolerant, yet versatile network for Kubernetes in an on-premise data center poses three challenges: 1) large east-west traffic, 2) vendor-neutral redundant network connectivity, and 3) a modular design that lets us assemble good parts. Let us explain them one by one.

The first challenge is to build a highly scalable network, especially for east-west traffic. In the Kubernetes network model, IP addresses of Pods (containers) are dynamically assigned, and therefore communications between Pods cross rack boundaries in general. This type of communication is called east-west traffic. As a Kubernetes cluster grows, the number of Pods, and with it the volume of east-west traffic, increases.

The second is how to make network connectivity for servers redundant. Traditional approaches include Spanning Tree Protocol (STP) and Multi-Chassis Link Aggregation (MC-LAG). STP is a legacy protocol designed to prevent loops rather than to provide redundancy. MC-LAG typically involves vendor-dependent technologies. It would be great if a server could have redundant network links without these technologies.

The third challenge is specific to the design of Calico and MetalLB. Calico is a widely used Kubernetes network plugin that can be configured to use BGP for the best performance. MetalLB can (and should) also be configured to use BGP to provide a LoadBalancer implementation for Kubernetes. Unfortunately, there is a problem when both are configured to use BGP, largely because Calico embeds BIRD, a software BGP router, and does not allow the configuration needed to work with MetalLB.

Decision drivers

We wanted the Neco network to be vendor-neutral, high-performance, and easy to scale out.

By eliminating vendor-dependent technologies, we can choose cost-effective switches. Moreover, we can simulate and test the data center network easily with open source software such as BIRD.

Performance is important because it is one of the reasons to run on-premise data centers. Scalability is also important because we expect a Neco data center to have thousands of servers.

Leaf-spine topology and use of BGP

The first decision we made was to employ leaf-spine network topology and use BGP to exchange routing information. With leaf-spine topology, every inter-rack communication takes a constant number of hops. The network can also be scaled out easily by just adding spine switches. So leaf-spine is best suited to our needs.
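
The constant-hop property can be checked with a small model. The following is a minimal sketch, not part of Neco: it assumes an idealized fabric in which every leaf links to every spine, and the switch names and counts are made up for illustration.

```python
from collections import deque
from itertools import combinations


def build_leaf_spine(num_spines, num_leaves):
    """Return an adjacency map in which every leaf links to every spine."""
    spines = [f"spine{i}" for i in range(num_spines)]
    leaves = [f"leaf{i}" for i in range(num_leaves)]
    graph = {node: set() for node in spines + leaves}
    for leaf in leaves:
        for spine in spines:
            graph[leaf].add(spine)
            graph[spine].add(leaf)
    return graph, leaves


def hop_count(graph, src, dst):
    """Breadth-first search for the number of links on a shortest path."""
    distance = {src: 0}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            return distance[node]
        for peer in graph[node]:
            if peer not in distance:
                distance[peer] = distance[node] + 1
                queue.append(peer)
    return None  # dst is unreachable from src


def leaf_to_leaf_hops(num_spines, num_leaves):
    """Collect the distinct shortest-path lengths between all leaf pairs."""
    graph, leaves = build_leaf_spine(num_spines, num_leaves)
    return {hop_count(graph, a, b) for a, b in combinations(leaves, 2)}


if __name__ == "__main__":
    # Hypothetical fabric sizes; both print the same set of path lengths.
    print(leaf_to_leaf_hops(num_spines=2, num_leaves=4))
    print(leaf_to_leaf_hops(num_spines=4, num_leaves=40))
```

In this model every leaf pair is exactly two links apart (leaf, spine, leaf) no matter how many racks or spines are added. Adding spines only increases the number of parallel paths.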

BGP, or Border Gateway Protocol version 4, is the routing protocol for the Internet. Its proven scalability and fault-tolerance are also good for large on-premise data centers. Another reason to use BGP is that we want to use MetalLB to implement LoadBalancer for Kubernetes. MetalLB works best when used with BGP.

Leaf switches are placed in the data center as top-of-rack (ToR) switches. Since communications between leaf and spine are done at layer 3, layer 2 broadcast domains are confined to each rack.

BGP and BFD for redundant server connectivity

A data center rack usually can hold about 30 servers. If there is only one ToR switch per rack, a single switch failure would make all servers in the rack inaccessible. To avoid this, each rack is equipped with two ToR switches, and each server connects to both.

On the server side, the operating system should be configured to use the two connections to make connectivity redundant. As described in Challenges, STP and MC-LAG are not options. We decided to use BGP on each server to utilize the two connections because BGP is used elsewhere in the Neco network and there are good open-source BGP implementations on Linux.

That said, using BGP alone has several problems. One is that BGP by itself can take minutes to converge routes after a connection fails. Another is that, unlike a switch, a server is an endpoint, and an endpoint needs an IP address that stays reachable from other servers even when one connection is unavailable.

To minimize the convergence time, we use BFD alongside BGP. BFD is a protocol that detects link failures very quickly (often within 100 milliseconds) by exchanging heartbeats between both sides. BFD is a Proposed Standard specified in RFC 5880 and is widely implemented in switches and software.
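
To give a feel for the numbers, here is a rough sketch of how the BFD detection time is derived in asynchronous mode as described in RFC 5880: the detect multiplier received from the peer times the agreed interval, where the agreed interval is the greater of the local required minimum receive interval and the peer's desired minimum transmit interval. The timer values below (30 ms intervals, a multiplier of 3, and the 90-second BGP hold time suggested in RFC 4271) are assumptions chosen for illustration, not the settings used in Neco.

```python
# Assumed values for illustration only; they are not Neco's configuration.
ASSUMED_INTERVAL_MS = 30       # both sides send and accept packets every 30 ms
ASSUMED_DETECT_MULT = 3        # missed packets tolerated before declaring failure
ASSUMED_BGP_HOLD_TIME_S = 90   # hold time suggested in RFC 4271


def bfd_detection_time_ms(remote_detect_mult, local_required_min_rx_ms,
                          remote_desired_min_tx_ms):
    """Detection time in BFD asynchronous mode (RFC 5880).

    The peer transmits at the slower of what it wants to send and what
    we are willing to receive; the session is declared down after
    remote_detect_mult such intervals pass without a packet.
    """
    agreed_interval_ms = max(local_required_min_rx_ms, remote_desired_min_tx_ms)
    return remote_detect_mult * agreed_interval_ms


if __name__ == "__main__":
    bfd_ms = bfd_detection_time_ms(
        ASSUMED_DETECT_MULT, ASSUMED_INTERVAL_MS, ASSUMED_INTERVAL_MS
    )
    print(f"BFD detection time: {bfd_ms} ms")
    print(f"BGP hold time:      {ASSUMED_BGP_HOLD_TIME_S * 1000} ms")
```

With these assumed timers, BFD notices a dead peer in 90 ms, while a BGP session relying only on its hold timer would wait up to 90 seconds.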

To give each server an IP address that remains reachable from other servers, a so-called management IP address with a /32 netmask is assigned to each server. The management address is advertised via BGP. The following diagram illustrates the connections and IP addresses between ToR switches and servers.

By registering the routes learned from the two ToR switches as Equal-Cost Multi-Path (ECMP) routes in the Linux kernel, this implementation can use both connections actively.
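
Conceptually, ECMP spreads traffic by hashing some fields of each packet and using the result to pick one of the equal-cost next hops, so packets of one flow stay on one path. The sketch below illustrates that idea only. It is not the Linux kernel's hash function, and the choice of SHA-256 over a 5-tuple is an assumption made for readability. The next-hop addresses are the ones that appear in the routing table shown later in this article, and the flow endpoints are likewise taken from that output.

```python
import hashlib

# Next hops taken from the routing table excerpt later in this article.
NEXT_HOPS = [("10.69.0.65", "eth0"), ("10.69.0.129", "eth1")]


def pick_next_hop(src_ip, dst_ip, src_port, dst_port, protocol, next_hops):
    """Map a flow to one next hop by hashing its 5-tuple.

    Illustrative only: real ECMP implementations use their own hash
    functions and may hash fewer fields.
    """
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}|{protocol}".encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:4], "big") % len(next_hops)
    return next_hops[index]


if __name__ == "__main__":
    # Flows that differ only in source port may land on different links,
    # but a given flow always maps to the same link.
    for port in range(40000, 40008):
        hop = pick_next_hop("10.69.0.4", "10.69.1.133", port, 443, "tcp", NEXT_HOPS)
        print(port, hop)
```

Because the mapping is deterministic per flow, packets within a connection are not reordered across the two links, while many concurrent flows are spread over both.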

Testing the network

Since the network architecture adopts only open standard protocols, the network can be implemented virtually using the Linux network stack and the routing software BIRD. In fact, we have implemented the network, including the leaf and spine switches, in a virtual environment and tested its functionality.

The following console output is an excerpt from a server in the virtual test environment.

The server has two NIC ports, eth0 and eth1, that are connected to the ToR switches. In addition, the server has a virtual NIC named node0 which is given the IP address 10.69.0.4/32. This is the management address that is reachable from other servers.

The routing table of the server has two ECMP routes to another host whose address is 10.69.1.133/32.

    cybozu@rack0-worker4 ~ $ ip -4 addr
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
    2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000
        inet 10.69.0.68/26 brd 10.69.0.127 scope link eth0
           valid_lft forever preferred_lft forever
    3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000
        inet 10.69.0.132/26 brd 10.69.0.191 scope link eth1
           valid_lft forever preferred_lft forever
    4: node0: <BROADCAST,NOARP,UP,LOWER_UP> mtu 1500 qdisc noqueue state UNKNOWN group default qlen 1000
        inet 10.69.0.4/32 scope global node0
           valid_lft forever preferred_lft forever
    cybozu@rack0-worker4 ~ $ ip -4 route
    ...
    10.69.1.133 proto bird metric 32
        nexthop via 10.69.0.65 dev eth0 weight 1
        nexthop via 10.69.0.129 dev eth1 weight 1
    ...
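
The addressing in the output above can be cross-checked with Python's standard ipaddress module. This is just a sanity-check sketch using the addresses copied from the console output. It confirms that each ECMP next hop sits on the /26 subnet of the NIC it is reached through, and that the management address on node0 belongs to neither subnet.

```python
import ipaddress

# Addresses copied from the console output above.
INTERFACES = {
    "eth0": ipaddress.ip_interface("10.69.0.68/26"),
    "eth1": ipaddress.ip_interface("10.69.0.132/26"),
    "node0": ipaddress.ip_interface("10.69.0.4/32"),
}
NEXT_HOPS = {
    "eth0": ipaddress.ip_address("10.69.0.65"),
    "eth1": ipaddress.ip_address("10.69.0.129"),
}


def on_link(device, address):
    """True when the address lies inside the subnet configured on the device."""
    return address in INTERFACES[device].network


if __name__ == "__main__":
    for device, hop in NEXT_HOPS.items():
        iface = INTERFACES[device]
        print(device, iface.network, iface.network.broadcast_address, on_link(device, hop))
    node0 = INTERFACES["node0"].ip
    print("node0 on eth0/eth1 subnet:", on_link("eth0", node0), on_link("eth1", node0))
```

The computed broadcast addresses match the brd values in the output, and since node0 is on neither link subnet, other hosts can reach it only through the routes advertised via BGP.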

Next is a screen capture that shows instant route withdrawal when one of the hosts goes down.

rack0-worker4 and rack1-worker4 are two hosts belonging to different racks.

Initially, the routing table of rack1-worker4 has an entry for the management address of rack0-worker4.

When the virtual NIC of rack0-worker4 is shut down, the routing entry in rack1-worker4 disappears instantly.

Coil, a modular CNI plugin

As we have seen so far, each server runs a BGP/BFD service like BIRD for redundant network connectivity. Naturally, we wanted to let this BGP service advertise routing information of Kubernetes Pods.

So we created a new CNI plugin named Coil. Coil can be combined with any routing software because it exports the routing information that should be advertised into a Linux kernel routing table. Routing software, or a helper program, can import the routing information from that table and advertise it to other servers.

We will discuss Coil in more detail, and how to combine it with BIRD, MetalLB, and Calico, in another article.
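
To make the hand-off concrete, the following is a hypothetical sketch of what a helper program might do with the text printed by ip route for such a table: collect the destination prefixes so they can be passed to the routing software. Everything specific here is an assumption for illustration, including the function name parse_exported_routes and the sample routes. It is not Coil's code or its actual table layout.

```python
import ipaddress


def parse_exported_routes(ip_route_output):
    """Extract destination prefixes from text in `ip route` format.

    Continuation lines (indented, such as `nexthop ...`) and entries whose
    first field is not a prefix (such as `default`) are skipped.
    """
    prefixes = []
    for line in ip_route_output.splitlines():
        if not line or line[0].isspace():
            continue
        destination = line.split()[0]
        try:
            prefixes.append(ipaddress.ip_network(destination))
        except ValueError:
            continue
    return prefixes


# Made-up sample; real table contents depend on how Coil is configured.
SAMPLE_OUTPUT = """\
10.64.0.0/27 dev lo scope link
10.64.0.32/27 dev lo scope link
default via 10.69.0.65 dev eth0
"""

if __name__ == "__main__":
    for prefix in parse_exported_routes(SAMPLE_OUTPUT):
        print("advertise", prefix)
```

In practice, routing software such as BIRD can import a kernel routing table directly, so a parser like this is only needed when a separate helper program sits in between.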

Summary

We have designed and implemented a large-scale data center network for Kubernetes. The network employs leaf-spine topology and BGP to scale with east-west traffic.

For servers, we use BGP alongside BFD to make network connectivity redundant. By registering ECMP routes in the Linux kernel, a server can use all of its network links actively.

The network can be implemented and tested in a virtual environment using the Linux network stack and the open-source software BIRD because no vendor-dependent technologies are used.

This article does not cover implementation details. Please see "Modular, Pure Layer 3 Network for Kubernetes: The implementation" to learn about them.